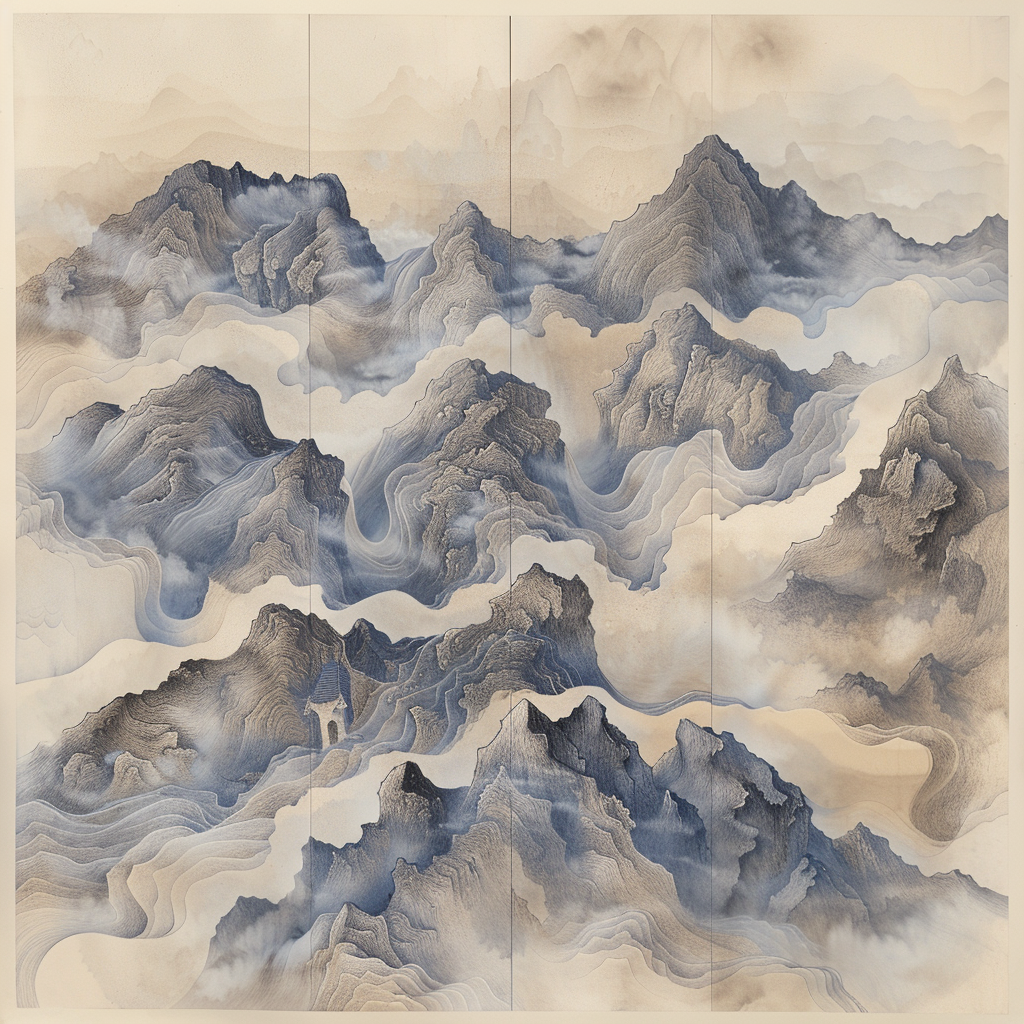

I still have that era on my wall

I made art during the early Midjourney wave and printed several pieces. They are still on my wall, and I am genuinely glad I kept them. At the time, the outputs felt wild and beautiful, but also surreal and broken.

Prompt-to-image changed our evenings

Some of my favorite memories are from evenings when my boys would describe something wild, I would turn it into a Midjourney prompt, and then we would wait together while the image rendered. They would dance around the room waiting to see what showed up.

That loop still means a lot to me because it became family time.

"We made this" mattered

Some images were printed on regular paper so they could carry them around and pin them up in their art spaces. That ownership feeling was real. It felt like something we made together, not something handed to us by a model.

That messy phase helped more than we realized

Looking back, those artifacts worked like accidental disclosure. Even without labels, we could usually tell: this is synthetic. The weirdness itself created a trust boundary.

This sits close to the disclosure dynamics I mapped in "The AI disclosure gap is here," where the quality of synthetic media began outpacing disclosure behavior.

Now the images are better, and trust got harder

Current image and video generation is good enough that "is this real?" is no longer a casual thought. The obvious visual tells are often gone.

I am not scanning for weird fingers anymore. I am asking myself what I can actually trust.

Running weekly research made this sharper

When Midjourney was the leading model, part of what we tested at McQueen Analytics was whether people could tell if an image was stock art or AI. Even running those questions in survey form felt surreal.

Each week we linked current events, trust questions, and image-recognition tests. What stood out: confidence rose faster than certainty once images looked polished.

That was one of my favorite research phases because everything was changing while we measured it.

This creation phase is already being replaced

This shift goes beyond cleaner images. The tools we build around are changing under our feet. In Sora's case, it now looks like the whole chapter is closed, not just one model version.

Reported on March 24-25, 2026: OpenAI is shutting down Sora as a service, including the Sora app and API. That is exactly the kind of turnover this post is pointing at. You can build around a tool and still watch the ground shift fast.

Sources: NBC News, Sora official account on X, WIRED.

My takeaway

I love those early broken images even more now. They document the last phase where synthetic media often announced itself.

The next phase needs stronger disclosure norms, provenance tools people can actually use, and better language for uncertainty. Without that, we normalize quiet trust erosion as background noise.